Automating Deep Learning: A Guide to Neural Architecture Search (NAS) Strategies

Explore the primary search strategies of NAS and their practical application to optimizing complex architectures

By Kuriko IWAI

Table of Contents

- Introduction

- What is Neural Architecture Search (NAS)?

Introduction

Deep learning has revolutionized technology, from image recognition and natural language processing to drug discovery. Its success rests on complex neural network architectures that domain experts have designed through countless experiments.

However, the reliance on human ingenuity presents a significant bottleneck.

Neural Architecture Search (NAS) automates this resource-heavy process of finding an optimal architecture design.

In this article, I’ll explore its core formulation and practical applications using three search strategies:

Reinforcement learning,

Evolutionary Algorithms, and

Gradient-based methods.

Building production-grade AI systems?

I help teams design and deploy scalable RAG pipelines, LLM systems, and MLOps infrastructure.

Or explore:

- Dive deeper 👉 Research Archive

- Learn by building 👉 AI Engineering Masterclass

- Try it live 👉 Playground

What is Neural Architecture Search (NAS)?

Neural Architecture Search (NAS) is an automated architect for neural networks that treats the network design process as an optimization problem.

Instead of a human sketching out a network layer by layer, a NAS algorithm explores thousands of potential options, testing each one to find the most effective design.

The diagram below shows a general framework of NAS:

Figure A. General framework of NAS and common search strategies (Created by Kuriko IWAI)

◼ The Goal: Minimizing the Validation Loss

At its core, NAS is a bi-level optimization problem.

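Given a dataset D and a performance metric M, the goal of NAS is to find an optimal architecture $\alpha^* \in \mathcal{A}$ that minimizes the validation loss:

$$\alpha^* = \arg\min_{\alpha \in \mathcal{A}} \mathcal{L}_{val}\big(w^*(\alpha), \alpha\big) \tag{1}$$

where

$\mathcal{A}$ is the architecture search space,

$\mathcal{L}_{val}$ and $\mathcal{L}_{train}$ denote the losses on the validation set and the training set, and

$w^*(\alpha)$ represents the optimal weights for a given architecture $\alpha$, which are found by training the network on the training set:

$$w^*(\alpha) = \arg\min_{w} \mathcal{L}_{train}(w, \alpha) \tag{2}$$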

This formulation highlights the two levels of the optimization:

The inner loop is the standard training of a given network, described in formula (2), and

The outer loop is the search for the best architecture itself, described in formula (1).

The challenge lies in the outer loop: the architecture choices are discrete, so the search cannot simply follow a gradient, and every candidate evaluation requires running the inner loop.
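To make the two levels concrete, here is a minimal, self-contained sketch. It assumes a toy search space of two hypothetical "architectures" (a constant model and a linear model) and a tiny synthetic dataset; none of these names come from the article. The inner loop fits the weights on the training split, and the outer loop picks the architecture with the lowest validation loss.

```python
# Toy bi-level optimization: the inner level fits weights, the outer level picks the architecture.
# The two "architectures" and the data are hypothetical, chosen only for illustration.

def fit_constant(xs, ys):
    # Inner loop for the constant model: the least-squares weight is the mean of y.
    mean_y = sum(ys) / len(ys)
    return lambda x: mean_y

def fit_linear(xs, ys):
    # Inner loop for the linear model: closed-form least squares for slope and intercept.
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    slope = cov / var
    intercept = mean_y - slope * mean_x
    return lambda x: slope * x + intercept

def mse(model, xs, ys):
    return sum((model(x) - y) ** 2 for x, y in zip(xs, ys)) / len(xs)

search_space = {"constant": fit_constant, "linear": fit_linear}

# Synthetic data from y = 2x + 1, split into training and validation sets.
x_train, y_train = [0.0, 1.0, 2.0, 3.0], [1.0, 3.0, 5.0, 7.0]
x_val, y_val = [4.0, 5.0], [9.0, 11.0]

# Outer loop: score each architecture by the validation loss of its trained weights.
val_losses = {}
for name, train_fn in search_space.items():
    trained = train_fn(x_train, y_train)           # inner loop, formula (2)
    val_losses[name] = mse(trained, x_val, y_val)  # objective of formula (1)

best = min(val_losses, key=val_losses.get)
print(best, val_losses)
```

With only two candidates the outer loop is a plain enumeration; the search strategies discussed later replace that enumeration when the space is too large to list.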

How Neural Architecture Search Works

In NAS, we take three steps to find the optimal architecture:

◼ Step 1. Defining the Search Space

The first step is to define the search space: the set of all neural network architectures that the algorithm can construct. It specifies the available building blocks, such as convolutional layers, recurrent cells, or attention mechanisms, as well as the rules for how they can be connected.

For example, a search space might allow for networks with 5 to 15 layers, with each layer being a choice between a 3x3 convolution and a 5x5 convolution.
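As a quick sanity check on how fast even this small example grows, the sketch below counts its architectures. It assumes a plain sequential chain in which every layer independently picks one of the two convolution types; the variable names are illustrative.

```python
# Count the architectures in the example search space:
# depth from 5 to 15 layers, each layer is either a 3x3 or a 5x5 convolution.
layer_choices = ["conv3x3", "conv5x5"]
depths = range(5, 16)  # 5..15 inclusive

# A chain of depth d has len(layer_choices) ** d distinct layer assignments.
num_architectures = sum(len(layer_choices) ** d for d in depths)
print(num_architectures)  # 65504
```

Even with two layer types and no branching, the space already holds tens of thousands of candidates, which is why exhaustively training each one is rarely an option.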

Note: Difference from hyperparameter tuning

Hyperparameter tuning optimizes settings such as the learning rate, batch size, or choice of optimizer for a fixed network structure.

The architecture itself stays the same, for example a network with two hidden layers.

NAS, on the other hand, optimizes the structure of the network itself, including the number of layers, the number of neurons in each layer, the type of layers (e.g., convolutional vs. pooling), and how they are connected.

◼ Step 2. Selecting a Search Strategy

The search strategy refers to the algorithm that navigates the search space to find the best-performing architecture. Common strategies include:

Reinforcement Learning (RL):

RL frames network construction as a sequential decision task: an agent (the controller) builds a network one choice at a time and receives a reward based on the network's performance.

Learn More: Deep Reinforcement Learning for Self-Evolving AI

Best When:

Complex and discrete search space: RL agents are good at navigating large, non-differentiable search spaces, where a simple gradient-based approach wouldn't work.

Optimizing multiple objectives simultaneously: Design a reward function that incorporates multiple factors like accuracy, latency, and model size, allowing the agent to find a balanced architecture.

A controller can be trained at scale: Given a sufficiently large amount of data to train on, the controller can learn generalizable architectural patterns.
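The multi-objective point above can be sketched as a single scalar reward. This is a minimal illustration, not the reward used later in this article: the function name, the targets, and the penalty weights are all hypothetical.

```python
# Hypothetical scalar reward that trades accuracy against latency and model size.
# The targets and penalty weights are illustrative assumptions, not values from the article.

def multi_objective_reward(accuracy, latency_ms, num_params,
                           latency_target_ms=50.0, params_target=5_000_000,
                           latency_weight=0.5, size_weight=0.5):
    # Penalize only the portion that exceeds each target, relative to the target.
    latency_penalty = max(0.0, latency_ms / latency_target_ms - 1.0)
    size_penalty = max(0.0, num_params / params_target - 1.0)
    return accuracy - latency_weight * latency_penalty - size_weight * size_penalty

# A slightly less accurate but much faster, smaller model can earn the higher reward.
big = multi_objective_reward(accuracy=0.95, latency_ms=100.0, num_params=10_000_000)
small = multi_objective_reward(accuracy=0.93, latency_ms=40.0, num_params=4_000_000)
print(big, small)
```

Folding the objectives into one number keeps the RL update unchanged, at the cost of having to choose the weights up front.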

Evolutionary Algorithms (EA):

EAs mimic natural selection to evolve a population of network architectures. Architectures are treated as "species" that "mutate" and "crossover" to create new, potentially better, offspring.

Best When:

Extremely broad search space: EAs maintain a diverse population, so they are less likely to get stuck in local optima and cope well with broad search spaces.

Large-scale computing clusters: The independent nature of evaluating each "individual" in the population enables EAs to run each evaluation in parallel.

Multi-objective optimization: EAs can find a set of non-dominated solutions (a Pareto front) when optimizing for multiple competing objectives, such as maximizing accuracy while minimizing model size.
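To illustrate what a Pareto front means here, the following sketch filters a handful of made-up candidates down to the non-dominated ones. The candidate names and numbers are invented for the example.

```python
# Candidates as (name, accuracy, num_params); higher accuracy and fewer parameters are better.
# The numbers are made up for illustration.
candidates = [
    ("A", 0.90, 1_000_000),
    ("B", 0.92, 3_000_000),
    ("C", 0.91, 4_000_000),  # dominated by B: less accurate and larger
    ("D", 0.95, 8_000_000),
    ("E", 0.89, 2_000_000),  # dominated by A: less accurate and larger
]

def dominates(p, q):
    # p dominates q if it is at least as good on both objectives and strictly better on one.
    _, acc_p, size_p = p
    _, acc_q, size_q = q
    at_least_as_good = acc_p >= acc_q and size_p <= size_q
    strictly_better = acc_p > acc_q or size_p < size_q
    return at_least_as_good and strictly_better

pareto_front = [c for c in candidates
                if not any(dominates(other, c) for other in candidates)]
print([name for name, _, _ in pareto_front])  # ['A', 'B', 'D']
```

Each model left on the front represents a different trade-off, so the final pick depends on the deployment constraints rather than on a single score.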

Gradient-based Methods (e.g., DARTS)

This approach relaxes the discrete choice between operations into a continuous one, so that the architecture can be optimized with gradient descent.

Best When:

Computational efficiency is critical: Gradient-based methods like Differentiable Architecture Search (DARTS) are generally among the fastest NAS techniques in search time, although the over-parameterized network that holds every candidate operation can be memory-intensive.

Well-defined and small search space: When the number of possible combinations is limited, the continuous approximation of the search space is more accurate.

A single, optimized architecture: Unlike EAs or RL, gradient-based methods directly optimize for a single, final architecture, making them ideal for quick prototyping and deployment.
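The idea of a continuous search space can be sketched without any deep learning library. In this minimal example, the candidate operations and the `alphas` values are illustrative assumptions: rather than picking one operation, every candidate is blended with softmax weights, and the blend is discretized at the end by keeping the highest-scoring candidate.

```python
import math

# Continuous relaxation of a discrete choice: instead of picking one operation,
# mix all candidates with softmax weights over learnable scores (alphas).
# The operations and alpha values below are illustrative assumptions.

def softmax(scores):
    top = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

candidate_ops = {
    "relu": lambda x: max(0.0, x),
    "tanh": math.tanh,
    "identity": lambda x: x,
}

alphas = [0.1, 1.5, -0.3]  # one architecture parameter per candidate operation

def mixed_op(x, alphas):
    weights = softmax(alphas)
    return sum(w * op(x) for w, op in zip(weights, candidate_ops.values()))

# During search, the output is a weighted blend of every candidate.
blended = mixed_op(0.5, alphas)
print(blended)

# After search, the blend is discretized by keeping the highest-scoring operation.
best_index = max(range(len(alphas)), key=lambda i: alphas[i])
best_op = list(candidate_ops)[best_index]
print(best_op)  # tanh
```

Because the blend is differentiable with respect to `alphas`, the architecture scores can be updated by the same optimizer machinery as ordinary weights.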

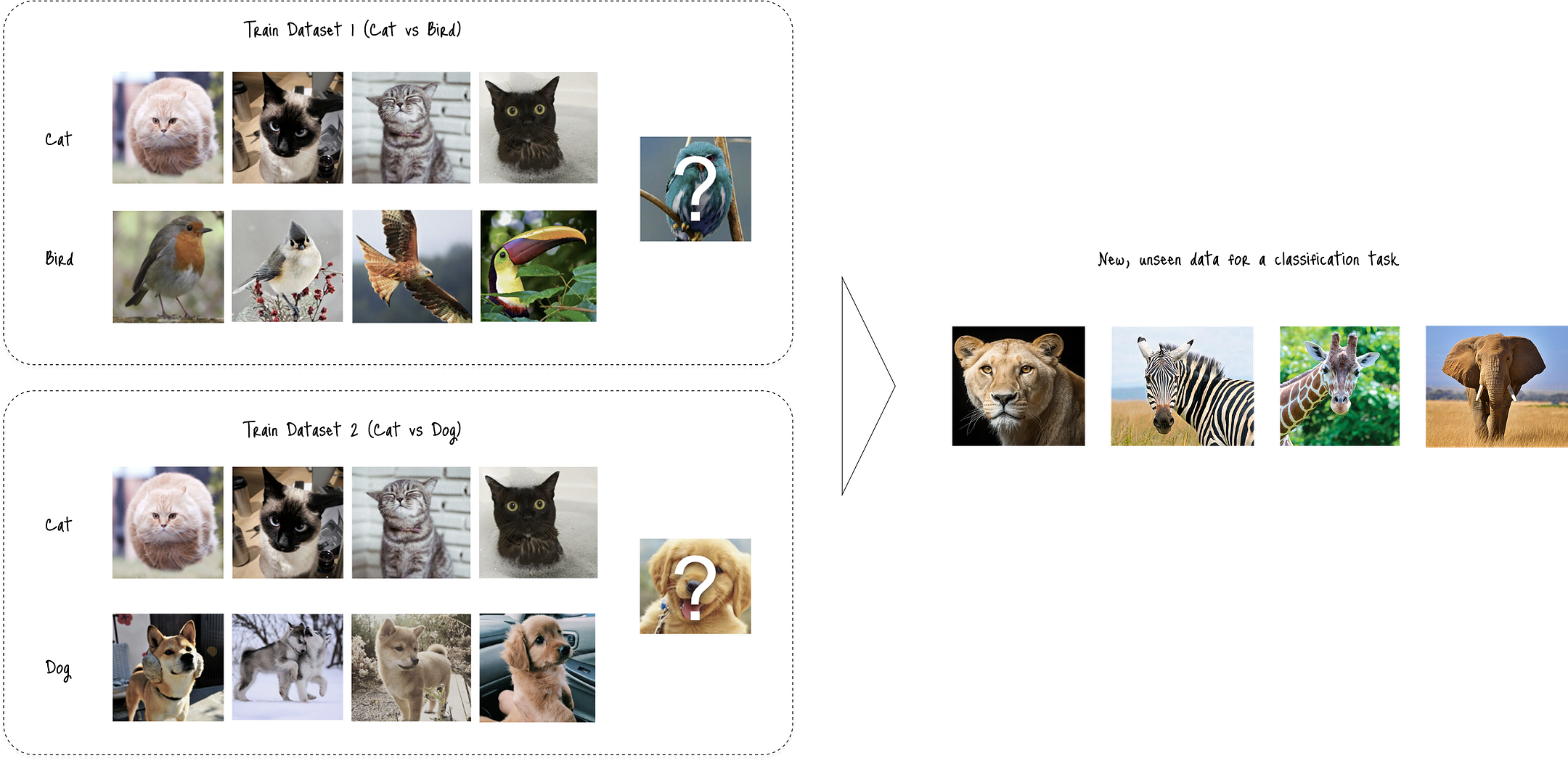

◼ Step 3. Evaluating Performance

Lastly, the algorithm evaluates the quality of a candidate architecture based on its validation loss.

Since fully training every single candidate is computationally infeasible, various techniques are used to speed up this process, such as training on a smaller subset of data, using a performance predictor model, or employing weight sharing across different architectures.

Why Neural Architecture Search

Now that we have seen the NAS optimization problem, it is clear that solving it manually, that is, searching by hand for the architecture that satisfies formula (1), is extremely tedious.

It relies heavily on human intuition, countless hours of experimentation, and deep domain expertise, which can be a significant bottleneck in the model design process.

NAS automates this most challenging part, allowing humans to focus on the problem at hand.

◼ Advantages of NAS

The automation enables NAS to achieve two distinct advantages:

Surpassing human-designed architectures

Architectures found by NAS can outperform human-designed ones.

For example, architectures found by NAS methods such as AmoebaNet (evolutionary search) and ENAS (reinforcement learning with weight sharing) have reported results competitive with or better than hand-designed networks that took years of human effort to develop.

Finding more efficient architecture

NAS can find not just the most accurate, but also the most efficient architectures—those with fewer parameters, lower latency, or less memory usage.

This makes NAS-designed models suitable for deployment on mobile devices.

◼ Beyond Traditional Architectures

Overcoming the limitations of manual architecture search, NAS has been extended to other aspects of the machine learning pipeline:

Search for Loss Functions: Instead of relying on a predefined loss function (e.g., cross-entropy), NAS can find a more effective loss function tailored to the dataset and task.

Optimize Hyperparameters: NAS can jointly search for an optimal network architecture and its associated hyperparameters.

Search for Data Augmentation Policies: Instead of manually selecting data transformations (e.g., flips, rotations), NAS can find an optimal sequence of augmentations for a specific dataset.

Design Hardware-Efficient Models: By incorporating hardware metrics like latency and power consumption into the search objective, NAS can design models that are specifically optimized for deployment on resource-constrained devices like smartphones and IoT sensors.

Improve Generative Models: NAS can find better architectures for both the generator and discriminator networks in Generative Adversarial Networks (GANs), leading to more stable training and higher-quality generated content.

◼ Real-World Use Cases

Thanks to this versatility, NAS has many use cases, such as:

Computer Vision: Discovering specialized architecture or loss functions for tasks like object detection with tiny objects, semantic segmentation with highly imbalanced classes, or image super-resolution with unique perceptual requirements.

Natural Language Processing: Finding architectures that better handle specific linguistic challenges, such as rare words, long-range dependencies, or subtle sentiment nuances.

Medical Imaging: Generating loss functions that are highly sensitive to subtle anomalies in medical scans, which is critical for early disease detection.

Simulation

Now, I’ll demonstrate the three search strategies and compare the architectures they find, taking a Recurrent Neural Network (RNN) as an example.

Any architecture can be optimized, but complex ones can particularly benefit from NAS.

◼ Defining the Search Space (Step 1)

The first step is to define the search space.

A NAS algorithm needs a set of rules to follow to deliver performance. For example:

Allowed Building Blocks: We specify which layer types (e.g., dense, convolutional), activation functions, and optimizers the algorithm is allowed to use.

Connectivity Rules: We define how these layers can be connected (e.g., must be a sequential chain, can have skip connections, etc.).

Ranges of Values: We set the bounds for things like the number of layers, the number of neurons per layer, or the dropout rate.

The search space sets these rules and, with them, the size of the problem. It should be:

flexible enough to contain novel, high-performing architectures, but

constrained enough to be computationally tractable.

I defined a search_space dictionary that follows this principle while giving the algorithm a rich set of building blocks to choose from.

The NAS algorithm simultaneously tunes both the architecture's structure (num_hidden_layers, hidden_layer_size, activation_function) and the hyperparameters (learning_rate, optimizer, dropout_rate) to find the best overall combination.

    import torch.nn as nn
    import torch.optim as optim

    search_space = {
        # architecture's structure
        'num_hidden_layers': [1, 2, 3, 4, 5],
        'hidden_layer_size': [32, 64, 128, 256, 512],
        'activation_function': ['ReLU', 'LeakyReLU', 'Tanh'],

        # hyperparameters
        'learning_rate': [0.1, 0.01, 0.001, 0.0001],
        'optimizer': ['Adam', 'SGD', 'RMSprop'],
        'dropout_rate': [0.0, 0.2, 0.4, 0.6]
    }

    # map the names used in search_space to the PyTorch classes
    activation_map = {
        'ReLU': nn.ReLU,
        'LeakyReLU': nn.LeakyReLU,
        'Tanh': nn.Tanh
    }

    optimizer_map = {
        'Adam': optim.Adam,
        'SGD': optim.SGD,
        'RMSprop': optim.RMSprop
    }
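To get a feel for the size of this search space, the sketch below counts the blueprints and draws one at random. The dictionary is repeated so the snippet runs on its own; uniform random sampling is shown only as a simple baseline and is not one of the three strategies compared in this article.

```python
import math
import random

# Same dictionary as in the article, repeated so the snippet runs on its own.
search_space = {
    'num_hidden_layers': [1, 2, 3, 4, 5],
    'hidden_layer_size': [32, 64, 128, 256, 512],
    'activation_function': ['ReLU', 'LeakyReLU', 'Tanh'],
    'learning_rate': [0.1, 0.01, 0.001, 0.0001],
    'optimizer': ['Adam', 'SGD', 'RMSprop'],
    'dropout_rate': [0.0, 0.2, 0.4, 0.6],
}

# Every key is chosen independently, so the number of blueprints
# is the product of the list lengths.
num_blueprints = math.prod(len(options) for options in search_space.values())
print(num_blueprints)  # 3600

# Draw one blueprint uniformly at random.
random.seed(0)  # fixed seed only to make the example repeatable
blueprint = {key: random.choice(options) for key, options in search_space.items()}
print(blueprint)
```

With 3,600 blueprints, the small budgets used below (a handful of episodes or generations) evaluate only a tiny fraction of the space.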

◼ Evaluating Performance (Step 3)

For convenience, I defined the evaluate_architecture function first and will use it in the search strategy functions later.

The function takes a complete blueprint (both the structure and the hyperparameters) and returns a score (the validation loss), allowing the search strategy to determine which blueprints are best.

    import torch
    import torch.nn as nn

    def evaluate_architecture(architecture, X_train, y_train, X_val, y_val, num_epochs=50):
        # initialize model, optimizer, and loss function
        # (build_model is not shown in this article; it must turn the blueprint into an nn.Module)
        model = build_model(architecture)
        criterion = nn.MSELoss()

        optimizer_class = optimizer_map[architecture['optimizer']]
        optimizer = optimizer_class(model.parameters(), lr=architecture['learning_rate'])

        # train the model with full-batch gradient descent
        model.train()
        for _ in range(num_epochs):
            optimizer.zero_grad()
            outputs = model(X_train)
            loss = criterion(outputs, y_train)
            loss.backward()
            optimizer.step()

        # validate the model on the validation dataset
        model.eval()
        with torch.no_grad():
            val_outputs = model(X_val)
            val_loss = criterion(val_outputs, y_val)

        return val_loss.item()
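The article does not show build_model, so the following is only a plausible sketch of what such a helper could look like. It assumes a feed-forward regression network with one input and one output feature (matching the `nn.Linear(1, ...)` input layer used in the gradient-based model later on) and repeats a copy of activation_map so that it runs on its own.

```python
import torch
import torch.nn as nn

# Assumption: mirrors the article's activation_map so the snippet runs on its own.
activation_map = {'ReLU': nn.ReLU, 'LeakyReLU': nn.LeakyReLU, 'Tanh': nn.Tanh}

def build_model(architecture, in_features=1, out_features=1):
    """Hypothetical builder: stacks Linear -> activation -> (optional) Dropout blocks."""
    activation_cls = activation_map[architecture['activation_function']]
    layers = []
    width = in_features
    for _ in range(architecture['num_hidden_layers']):
        layers.append(nn.Linear(width, architecture['hidden_layer_size']))
        layers.append(activation_cls())
        if architecture['dropout_rate'] > 0:
            layers.append(nn.Dropout(p=architecture['dropout_rate']))
        width = architecture['hidden_layer_size']
    layers.append(nn.Linear(width, out_features))  # regression head
    return nn.Sequential(*layers)

example_blueprint = {
    'num_hidden_layers': 2, 'hidden_layer_size': 64, 'activation_function': 'Tanh',
    'learning_rate': 0.01, 'optimizer': 'Adam', 'dropout_rate': 0.2,
}
model = build_model(example_blueprint)
predictions = model(torch.zeros(8, 1))  # batch of 8 samples with 1 feature each
print(predictions.shape)  # torch.Size([8, 1])
```

The learning_rate and optimizer entries are ignored here on purpose: in evaluate_architecture they configure the optimizer, not the network.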

◼ Implementing Search Strategy (Step 2)

Next, we’ll choose and implement a search strategy. For demonstration, I’ll use all three strategies and compare performance.

▫ 1. Reinforcement Learning

First, I defined the ArchitectureController class that is used in the RL loop:

    import torch.nn as nn

    class ArchitectureController(nn.Module):
        def __init__(self, search_space):
            super().__init__()
            self.search_space = search_space
            self.keys = list(search_space.keys())
            # number of options for each key of the search space
            self.vocab_size = [len(search_space[key]) for key in self.keys]
            self.num_actions = len(self.keys)
            self.rnn = nn.RNN(input_size=1, hidden_size=64, num_layers=1)
            # one linear head per key, producing logits over that key's options
            self.policy_heads = nn.ModuleList([nn.Linear(64, vs) for vs in self.vocab_size])

        def forward(self, input, hidden):
            output, hidden = self.rnn(input, hidden)
            logits = [head(output.squeeze(0)) for head in self.policy_heads]
            return logits, hidden

Then, I defined the run_rl_search function to handle the search process:

    import torch
    import torch.optim as optim

    def run_rl_search(
        search_space, X_train, y_train, X_val, y_val, num_epochs=10, num_episodes=5
    ):
        # initialize the controller and its optimizer
        controller = ArchitectureController(search_space)
        controller_optimizer = optim.Adam(controller.parameters(), lr=0.01)

        # start search
        best_loss = float('inf')
        best_architecture = None
        for episode in range(num_episodes):
            # zero the gradient
            controller_optimizer.zero_grad()

            # initial hidden state; nn.RNN expects (num_layers, batch_size, hidden_size)
            hidden = torch.zeros(1, 1, 64)

            # store the log probabilities and architectural choices of this episode
            log_probs = []
            architecture = {}

            # sample one value per key of the search space
            for i, key in enumerate(controller.keys):
                # run the controller on a dummy input of shape (seq_len, batch_size, input_size)
                logits, hidden = controller(torch.zeros(1, 1, 1), hidden)

                # create a categorical distribution for the current architectural choice
                dist = torch.distributions.Categorical(logits=logits[i])

                # sample an action from the distribution
                action_index = dist.sample()

                # store the chosen value and its log probability
                architecture[key] = search_space[key][action_index.item()]
                log_probs.append(dist.log_prob(action_index))

            # compute validation loss
            val_loss = evaluate_architecture(architecture, X_train, y_train, X_val, y_val, num_epochs=num_epochs)

            # update the controller with a policy gradient step;
            # the reward is the negative validation loss
            reward = -val_loss
            policy_loss = torch.sum(torch.stack(log_probs) * -reward)
            policy_loss.backward()
            controller_optimizer.step()

            # keep track of the best architecture found so far
            if val_loss < best_loss:
                best_loss = val_loss
                best_architecture = architecture

        return best_architecture, best_loss
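The policy update at the end of each episode is easier to see on a single categorical choice written without PyTorch. The sketch below is a simplified, hand-derived version of that update, assuming plain gradient ascent instead of Adam; the function names and numbers are illustrative and not taken from the article's runs.

```python
import math

# One policy gradient (REINFORCE-style) update for a single categorical choice,
# written without PyTorch so the gradient is visible.

def softmax(logits):
    top = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(z - top) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def reinforce_step(logits, action, reward, lr=0.5):
    # Gradient of log pi(action) w.r.t. the logits is one_hot(action) - softmax(logits).
    probs = softmax(logits)
    grad_log_prob = [(1.0 if i == action else 0.0) - p for i, p in enumerate(probs)]
    # Gradient ascent on reward * log pi(action), i.e. descent on the policy loss.
    return [z + lr * reward * g for z, g in zip(logits, grad_log_prob)]

logits = [0.0, 0.0, 0.0]  # uniform policy over three options
before = softmax(logits)[1]

# Rewards are negative validation losses, so a sampled action with a large
# loss has its probability pushed down more than one with a small loss.
after_bad = softmax(reinforce_step(logits, action=1, reward=-3.0))[1]
after_mild = softmax(reinforce_step(logits, action=1, reward=-0.3))[1]
print(before, after_mild, after_bad)
```

Because every reward is negative, each sampled choice becomes less likely, only by different amounts. Subtracting a baseline (for example, a running average of past rewards) is a common remedy that this article's implementation does not use.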

▫ 2. Evolutionary Algorithms (EA)

I defined the run_evolutionary_search function, which by default evolves a population of ten architectures over five generations:

    import random

    def run_evolutionary_search(X, y, search_space, population_size=10, num_generations=5):
        best_loss = float('inf')
        best_architecture = None

        # create train and validation datasets
        split_idx = int(len(X) * 0.8)
        X_train, X_val = X[:split_idx], X[split_idx:]
        y_train, y_val = y[:split_idx], y[split_idx:]

        # initialize the population with randomly sampled architectures
        population = []
        for _ in range(population_size):
            architecture = {key: random.choice(search_space[key]) for key in search_space}
            population.append(architecture)

        # iterate over all generations
        for _ in range(num_generations):
            # evaluate every architecture of the current population
            fitness = []
            for arch in population:
                loss = evaluate_architecture(arch, X_train, y_train, X_val, y_val, num_epochs=10)
                fitness.append((loss, arch))

                if loss < best_loss:
                    best_loss = loss
                    best_architecture = arch

            # keep the 'elites' (best-performing half of the generation)
            fitness.sort(key=lambda x: x[0])
            new_population = []
            num_elites = population_size // 2
            elites = [arch for loss, arch in fitness[:num_elites]]
            new_population.extend(elites)

            # fill the rest of the population with mutated offspring of the elites
            while len(new_population) < population_size:
                parent1 = random.choice(elites)
                parent2 = random.choice(elites)

                # crossover: take each value from one of the two parents
                child = {key: random.choice([parent1[key], parent2[key]]) for key in parent1}

                # mutation: resample one randomly chosen key
                mutation_key = random.choice(list(search_space.keys()))
                child[mutation_key] = random.choice(search_space[mutation_key])
                new_population.append(child)

            population = new_population

        return best_architecture, best_loss

▫ 3. Gradient-Based Methods

For the gradient-based method, I first defined a simple multi-layer neural network whose layers mix all candidate activation functions:

    import torch
    import torch.nn as nn

    # a cell applies every candidate operation and returns their weighted sum
    class Cell(nn.Module):
        def __init__(self, in_features, out_features, ops):
            super().__init__()
            self.ops = nn.ModuleList([
                nn.Sequential(nn.Linear(in_features, out_features), op()) for op in ops
            ])

        def forward(self, x, weights):
            return sum(w * op(x) for w, op in zip(weights, self.ops))


    class Model(nn.Module):
        def __init__(self, search_space):
            super().__init__()
            self.ops_list = [activation_map[name] for name in search_space['activation_function']]
            self.num_ops = len(self.ops_list)
            # depth and width are fixed; only the activation functions are searched
            self.num_hidden_layers = max(search_space['num_hidden_layers'])
            self.hidden_layer_size = search_space['hidden_layer_size'][0]
            # architecture parameters: one score per layer and candidate operation
            self.alphas = nn.Parameter(torch.randn(self.num_hidden_layers, self.num_ops))
            self.layers = nn.ModuleList()
            self.layers.append(nn.Linear(1, self.hidden_layer_size))
            for _ in range(self.num_hidden_layers - 1):
                self.layers.append(Cell(self.hidden_layer_size, self.hidden_layer_size, self.ops_list))
            self.output_layer = nn.Linear(self.hidden_layer_size, 1)

        def forward(self, x):
            # softmax turns the scores into mixing weights for each layer
            architecture_weights = nn.functional.softmax(self.alphas, dim=-1)
            output = x
            for i, layer in enumerate(self.layers):
                if isinstance(layer, nn.Linear):
                    output = layer(output)
                elif isinstance(layer, Cell):
                    output = layer(output, architecture_weights[i-1])
            return self.output_layer(output)

        def discretize(self):
            # values that are not searched by this method are fixed
            architecture = {
                'num_hidden_layers': self.num_hidden_layers,
                'hidden_layer_size': self.hidden_layer_size,
                'learning_rate': 0.001,
                'optimizer': 'Adam',
                'dropout_rate': 0.0
            }
            # pick the highest-scoring operation per layer; the blueprint holds a single
            # activation function, so only the first layer's choice is reported
            best_op_indices = self.alphas.argmax(dim=-1).tolist()
            best_ops = [self.ops_list[i].__name__ for i in best_op_indices]
            architecture['activation_function'] = best_ops[0]
            return architecture

Then, I defined the run_gradient_based_search function:

    import torch.nn as nn
    import torch.optim as optim

    def run_gradient_based_search(search_space, X_train, y_train, X_val, y_val, num_epochs=50):
        # define model, loss function, and optimizers
        model = Model(search_space)
        criterion = nn.MSELoss()

        # optimizer for the architecture parameters (alphas)
        arch_params = [model.alphas]
        optimizer_alpha = optim.Adam(arch_params, lr=0.001)

        # optimizer for the network weights (everything except the alphas)
        arch_param_ids = {id(p) for p in arch_params}
        weight_params = [p for p in model.parameters() if p.requires_grad and id(p) not in arch_param_ids]
        optimizer_w = optim.Adam(weight_params, lr=0.01)

        # start to search
        for epoch in range(num_epochs):
            # step 1: update the network weights on the training set
            optimizer_w.zero_grad()
            outputs = model(X_train)
            loss_w = criterion(outputs, y_train)
            loss_w.backward()
            optimizer_w.step()

            # step 2: update the architecture parameters on the validation set
            optimizer_alpha.zero_grad()
            val_outputs = model(X_val)
            loss_alpha = criterion(val_outputs, y_val)
            loss_alpha.backward()
            optimizer_alpha.step()

        # discretize the mixed network and retrain the resulting architecture from scratch
        best_architecture = model.discretize()
        final_loss = evaluate_architecture(best_architecture, X_train, y_train, X_val, y_val, num_epochs=50)

        return best_architecture, final_loss

◼ Results

In this run, the Evolutionary Algorithms (EA) approach was the most effective method, achieving the lowest validation MSE of 0.1498.

▫ 1. Reinforcement Learning (RL)

The RL method found a best validation MSE of 0.2744. The loss and reward values varied significantly over five episodes, with the best result occurring in the final episode.

Ran five episodes:

Episode 1: Loss = 1.1483, Reward = -1.1483

Episode 2: Loss = 3.2017, Reward = -3.2017

Episode 3: Loss = 4.0062, Reward = -4.0062

Episode 4: Loss = 2.5762, Reward = -2.5762

Episode 5: Loss = 0.2744, Reward = -0.2744

Best Architecture Found:

Num Hidden Layers: 4

Hidden Layer Size: 64

Activation Function: Tanh

Learning Rate: 0.1

Optimizer: RMSprop

Dropout Rate: 0.2

Best Validation MSE: 0.2744

▫ 2. Evolutionary Algorithms (EA)

EA reached the best overall validation MSE of 0.1498. This architecture was found in the second generation; the best loss in later generations fluctuated and did not improve on it.

Searched with a population of ten for five generations:

Generation 1/5 --- Best loss in this generation: 0.4558

Generation 2/5 --- Best loss in this generation: 0.1498

Generation 3/5 --- Best loss in this generation: 0.3062

Generation 4/5 --- Best loss in this generation: 0.4200

Generation 5/5 --- Best loss in this generation: 0.3125

Best Architecture Found:

Num Hidden Layers: 5

Hidden Layer Size: 512

Activation Function: Tanh

Learning Rate: 0.1

Optimizer: SGD

Dropout Rate: 0.2

Best Validation MSE: 0.1498

▫ 3. Gradient-based Methods

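This approach performed the worst in this run, despite searching for 50 epochs: the discretized architecture reached a validation MSE of 3.6725 when evaluated, far above the final Arch Loss of 0.2417 observed during the search.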

Searched with 50 epochs:

Epoch 10/50: Train Loss = 0.0938, Arch Loss = 2.1598

Epoch 20/50: Train Loss = 0.0509, Arch Loss = 1.6185

Epoch 30/50: Train Loss = 0.0338, Arch Loss = 1.7296

Epoch 40/50: Train Loss = 0.0184, Arch Loss = 0.4939

Epoch 50/50: Train Loss = 0.0114, Arch Loss = 0.2417

Best Architecture Found:

Num Hidden Layers: 5

Hidden Layer Size: 32

Activation Function: LeakyReLU

Learning Rate: 0.001

Optimizer: Adam

Dropout Rate: 0.0

Best Validation MSE: 3.6725

Wrapping Up

Neural Architecture Search (NAS) lets us find a well-performing architecture design with far less manual effort.

In our experiments, we observed how search strategies like Reinforcement Learning and Evolutionary Algorithms can find well-performing architectures within a small search budget, while the gradient-based method lagged behind in this setup.

Despite being a powerful method for designing high-performing architecture, traditional NAS faces two main challenges:

High computational cost: Early methods required thousands of GPU hours, making them expensive and slow.

Lack of generalization: Architectures are often optimized for a single task, meaning a new, costly search is needed for each different problem.

However, NAS remains a key approach because it can discover superior, novel architectures that outperform human-designed ones, particularly for tasks where state-of-the-art performance is critical.

The field is constantly evolving to develop more efficient algorithms and extend the NAS framework to solve new problems.

Written by Kuriko IWAI. All images, unless otherwise noted, are by the author. All experimentations on this blog utilize synthetic or licensed data.

Share What You Learned

Kuriko IWAI, "Automating Deep Learning: A Guide to Neural Architecture Search (NAS) Strategies" in Kernel Labs

https://kuriko-iwai.com/introduction-to-neural-architecture-search
